Course guidelines emphasize prerequisites like Calculus I & II, preparing students for rigorous exploration of functions extending beyond single variables, as seen in modern textbooks․

What is Multivariable Calculus?

Multivariable calculus extends the foundational principles of single-variable calculus – limits, derivatives, and integrals – to functions with multiple input variables․ Unlike traditional calculus dealing with functions of a single variable (like time), this branch explores functions dependent on several variables, such as spatial coordinates (x, y, z)․

Why Study Multivariable and Vector Calculus?

Studying multivariable and vector calculus unlocks the ability to model and analyze complex real-world phenomena inaccessible with single-variable techniques․ It’s foundational for disciplines like physics, where understanding vector fields (gravity, electromagnetism) is paramount, and engineering, where optimization problems with constraints are commonplace․

Furthermore, it’s essential for computer graphics, machine learning, and data science, enabling the manipulation and analysis of high-dimensional data․ Course guidelines highlight its reliance on prior calculus knowledge․ Resources like Active Calculus provide interactive learning, while textbooks offer a robust theoretical framework․ Mastering these concepts provides a powerful toolkit for solving advanced problems․

Functions of Several Variables

Exploring functions with multiple inputs expands mathematical modeling capabilities, moving beyond single-variable limitations and enabling analysis of complex relationships and systems․

Domain and Range of Multivariable Functions

Defining the domain for functions of several variables requires considering restrictions on all input variables simultaneously, unlike single-variable functions․ This involves identifying any values that would lead to undefined expressions, such as division by zero or taking the square root of a negative number․

The range represents the set of all possible output values the function can produce․ Determining the range can be significantly more challenging than finding the domain, often requiring a deep understanding of the function’s behavior and potentially utilizing optimization techniques․

Visualizing these concepts is crucial: the domain corresponds to a region of the input space (a region of the plane for a function of two variables), while the range of a real-valued function is a set of real numbers. Careful consideration of both is fundamental for accurate mathematical modeling.
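As a small illustration, the sketch below uses an assumed example function, f(x, y) = √(9 − x² − y²), which does not come from the text above. The square root forces 9 − x² − y² ≥ 0, so the domain is the closed disk x² + y² ≤ 9 and the range is the interval [0, 3]. The helper name in_domain is purely illustrative.

```python
import math

def in_domain(x, y):
    """True when (x, y) lies in the closed disk x^2 + y^2 <= 9."""
    return x**2 + y**2 <= 9

def f(x, y):
    """Assumed example: f(x, y) = sqrt(9 - x^2 - y^2)."""
    if not in_domain(x, y):
        raise ValueError(f"({x}, {y}) is outside the domain")
    return math.sqrt(9 - x**2 - y**2)

print(in_domain(1, 2))  # True: 1 + 4 <= 9
print(in_domain(3, 1))  # False: 9 + 1 > 9
print(f(0, 0))          # 3.0, the largest output (centre of the disk)
print(f(3, 0))          # 0.0, the smallest output (on the boundary circle)
```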

Partial Derivatives

Partial derivatives measure the rate of change of a multivariable function with respect to one variable, holding all others constant․ This contrasts with ordinary derivatives, which deal with single-variable functions․ Calculating these involves treating all variables except the one being differentiated as constants․

Understanding partial derivatives is vital for optimization problems, allowing us to find maximum and minimum values of functions with multiple inputs․ These derivatives reveal the function’s sensitivity to changes in each individual variable․

Applications extend to modeling real-world phenomena where multiple factors influence a given outcome, providing insights into how each factor contributes to the overall result․ They are a cornerstone of advanced calculus․

Calculating Partial Derivatives

To calculate a partial derivative, treat all variables except the one you’re differentiating as constants․ Apply the standard rules of differentiation from single-variable calculus to this simplified expression․ For example, to find ∂f/∂x, consider y as a constant․

Notation is key: ∂f/∂x represents the partial derivative of f with respect to x․ This process yields a new function that describes the rate of change along that specific variable․

Repeated partial derivatives are also possible, denoted as ∂²f/∂x² or ∂²f/∂x∂y, offering insights into the function’s curvature and behavior in multiple dimensions․ Mastering these calculations is fundamental․
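The rule "hold the other variable constant" can be checked numerically. The sketch below assumes an example function f(x, y) = x²y + sin(y), whose hand-computed partial derivatives are ∂f/∂x = 2xy and ∂f/∂y = x² + cos(y), and compares them with central-difference estimates. The function and the helper names partial_x and partial_y are illustrative assumptions.

```python
import math

def f(x, y):
    """Assumed example: f(x, y) = x^2 * y + sin(y)."""
    return x**2 * y + math.sin(y)

def partial_x(g, x, y, h=1e-5):
    """Central-difference estimate of dg/dx; y is held fixed."""
    return (g(x + h, y) - g(x - h, y)) / (2 * h)

def partial_y(g, x, y, h=1e-5):
    """Central-difference estimate of dg/dy; x is held fixed."""
    return (g(x, y + h) - g(x, y - h)) / (2 * h)

x0, y0 = 1.5, 0.5
exact_fx = 2 * x0 * y0            # from differentiating by hand
exact_fy = x0**2 + math.cos(y0)   # from differentiating by hand

print(partial_x(f, x0, y0), exact_fx)
print(partial_y(f, x0, y0), exact_fy)
```

Each printed pair should agree to several decimal places, which is a quick way to catch algebra slips in a hand computation.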

Applications of Partial Derivatives: Optimization

Partial derivatives are crucial for optimization problems involving functions of several variables. Finding critical points, where all first partial derivatives equal zero or at least one fails to exist, identifies candidates for local maxima, minima, or saddle points.

The second partial derivative test helps classify these critical points. For a function of two variables, the determinant of the Hessian matrix (the matrix of second partial derivatives), D = f_xx f_yy − (f_xy)², decides the outcome: D > 0 with f_xx > 0 gives a local minimum, D > 0 with f_xx < 0 gives a local maximum, D < 0 gives a saddle point, and D = 0 leaves the test inconclusive.

Real-world applications abound, from maximizing profit functions in economics to minimizing material usage in engineering, showcasing the power of multivariable calculus in solving complex problems․
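The classification step can be sketched in a few lines. The example assumes the function f(x, y) = x³ − 3x + y², chosen only for illustration. Its first partials, 3x² − 3 and 2y, vanish at (1, 0) and (−1, 0), and its second partials are f_xx = 6x, f_yy = 2, f_xy = 0. The names classify and hessian_entries are hypothetical helpers.

```python
def classify(fxx, fyy, fxy):
    """Second partial derivative test for a function of two variables."""
    D = fxx * fyy - fxy**2  # determinant of the Hessian matrix
    if D > 0:
        return "local minimum" if fxx > 0 else "local maximum"
    if D < 0:
        return "saddle point"
    return "inconclusive"

def hessian_entries(x, y):
    """Second partials (fxx, fyy, fxy) of the assumed f(x, y) = x^3 - 3x + y^2."""
    return 6 * x, 2.0, 0.0

for point in [(1.0, 0.0), (-1.0, 0.0)]:
    print(point, classify(*hessian_entries(*point)))
# (1.0, 0.0) local minimum
# (-1.0, 0.0) saddle point
```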

Gradient Vector

The gradient vector, a fundamental concept in multivariable calculus, represents the direction of the steepest ascent of a scalar function․ It’s composed of all the function’s first-order partial derivatives․

Mathematically, for a function f(x, y), the gradient is ∇f = <∂f/∂x, ∂f/∂y>․ This vector points in the direction where the function increases most rapidly from a given point․

Understanding the gradient is essential for optimization, directional derivatives, and analyzing vector fields, providing a powerful tool for exploring functions of multiple variables and their behavior․

Interpretation of the Gradient Vector

The gradient vector’s magnitude signifies the rate of change – the steepness – in the direction it points․ A larger magnitude indicates a steeper slope, meaning the function is changing rapidly․

Geometrically, the gradient is orthogonal (perpendicular) to the level curves or surfaces of the function․ Imagine contour lines on a map; the gradient points uphill, perpendicular to those lines․

This interpretation is crucial for understanding how functions behave in multiple dimensions, enabling us to find maximum and minimum values, and to analyze the function’s overall shape and behavior․
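Both geometric facts can be checked on a simple case. The sketch assumes f(x, y) = x² + y², whose level curves are circles centred at the origin and whose gradient is <2x, 2y>. At the point (3, 4) the level curve is the circle of radius 5, and a tangent direction to that circle is <−4, 3>. The function and point are illustrative choices.

```python
import math

def grad_f(x, y):
    """Gradient of the assumed example f(x, y) = x^2 + y^2."""
    return (2 * x, 2 * y)

x0, y0 = 3.0, 4.0
gx, gy = grad_f(x0, y0)

# Tangent to the level circle x^2 + y^2 = 25 at (3, 4).
tangent = (-y0, x0)

dot = gx * tangent[0] + gy * tangent[1]  # zero means perpendicular
magnitude = math.hypot(gx, gy)           # steepness in the uphill direction

print(dot)        # 0.0
print(magnitude)  # 10.0
```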

Directional Derivatives

Directional derivatives measure the rate of change of a multivariable function in a specific direction, unlike partial derivatives which focus on axes-aligned directions․

For a differentiable function, they are calculated as the dot product of the gradient vector and a unit vector u pointing in the desired direction: D_u f = ∇f · u. The result is a scalar representing the slope in that direction.

Understanding directional derivatives is vital for optimization problems, determining the maximum rate of increase, and analyzing how a function changes as you move along a particular path․ It extends the concept of slope to multiple dimensions, offering a powerful analytical tool․
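A minimal sketch of the dot-product recipe follows, assuming the example function f(x, y) = x²y with gradient <2xy, x²>. The helper directional_derivative is a hypothetical name; it normalizes the direction first, because the formula requires a unit vector.

```python
import math

def grad_f(x, y):
    """Gradient of the assumed example f(x, y) = x^2 * y."""
    return (2 * x * y, x**2)

def directional_derivative(grad, direction):
    """Dot the gradient with the normalized direction vector."""
    norm = math.hypot(direction[0], direction[1])
    unit = (direction[0] / norm, direction[1] / norm)
    return grad[0] * unit[0] + grad[1] * unit[1]

g = grad_f(1.0, 2.0)                           # (4.0, 1.0)
slope = directional_derivative(g, (3.0, 4.0))  # about 3.2
steepest = directional_derivative(g, g)        # |grad f| = sqrt(17), the maximum rate
print(slope, steepest)
```

Pointing the direction along the gradient itself recovers the gradient's magnitude, which is the largest slope available at that point.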

Vector Fields

Vector fields assign a vector to each point in space, representing quantities like velocity or force, crucial for modeling physical phenomena and line integrals․

Definition and Examples of Vector Fields

A vector field is a function that assigns a vector to each point in a two-dimensional or three-dimensional space. Formally, it’s a function F: ℝⁿ → ℝⁿ. Imagine wind velocity at every location on a map – that’s a vector field! Each point gets a vector indicating wind direction and speed.

Examples abound in physics and engineering․ Gravitational fields, electromagnetic fields, and fluid flow are all naturally described by vector fields․ Consider a simple 2D field F(x, y) =

Line Integrals

Line integrals extend the concept of definite integrals to functions defined along curves․ Instead of integrating over an interval on the x-axis, we integrate along a path in two or three dimensions․ There are two primary types: scalar and vector line integrals․

Scalar line integrals integrate a scalar function along a curve with respect to arc length, often representing quantities such as the mass of a thin wire with variable density. Vector line integrals integrate the tangential component of a vector field along a curve, giving the work done by a force field that may vary from point to point. These integrals are crucial for understanding concepts like work, circulation, and flux. They build upon the foundation of multivariable calculus, offering powerful tools for solving complex problems.

Scalar Line Integrals

Scalar line integrals involve integrating a scalar function, f, along a curve C․ This is achieved by parameterizing the curve with a vector function r(t), and then expressing the integral in terms of t․ Essentially, we’re summing up the values of the function multiplied by infinitesimal arc lengths along the curve․

Computationally, this involves substituting r(t) into f, multiplying by the magnitude of the derivative, |r'(t)| (the arc-length factor, since ds = |r'(t)| dt), and integrating with respect to t over the parameter interval. These integrals are fundamental for calculating quantities like the mass of a wire, the average value of a function along a curve, and the length of a curve (taking f = 1).
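The recipe translates directly into a numerical sketch. The example assumes a wire shaped like the upper half of the unit circle, r(t) = (cos t, sin t) for 0 ≤ t ≤ π, with an assumed density f(x, y) = y. Because |r'(t)| = 1 here, the exact mass is the integral of sin t from 0 to π, which is 2, and the exact length is π. The midpoint rule and the function name scalar_line_integral are illustrative choices.

```python
import math

def scalar_line_integral(f, r, r_prime, a, b, n=10_000):
    """Midpoint-rule estimate of the integral of f(r(t)) * |r'(t)| dt over [a, b]."""
    dt = (b - a) / n
    total = 0.0
    for i in range(n):
        t = a + (i + 0.5) * dt
        x, y = r(t)
        dx, dy = r_prime(t)
        total += f(x, y) * math.hypot(dx, dy) * dt  # f times the arc-length element
    return total

r = lambda t: (math.cos(t), math.sin(t))         # upper unit semicircle
r_prime = lambda t: (-math.sin(t), math.cos(t))  # its derivative

mass = scalar_line_integral(lambda x, y: y, r, r_prime, 0.0, math.pi)      # about 2
length = scalar_line_integral(lambda x, y: 1.0, r, r_prime, 0.0, math.pi)  # about pi
print(mass, length)
```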

Vector Line Integrals

Vector line integrals extend the concept to integrating a vector field F along a curve C. Unlike scalar integrals, we integrate the dot product of F with the unit tangent vector T with respect to arc length; since T ds = r'(t) dt, this equals the integral of F(r(t)) ⋅ r'(t) with respect to t. The integrand represents the component of the vector field acting in the direction of the curve’s tangent.

Computationally, this involves evaluating F(r(t)) ⋅ r'(t), and integrating with respect to t over the parameter interval․ These integrals are crucial for calculating work done by a force field, circulation, and flux․ Understanding vector line integrals is essential for grasping concepts like conservative vector fields and path independence․
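As a sketch of that computation, the example below assumes the rotational field F(x, y) = <−y, x> and one counterclockwise trip around the unit circle. On that curve F(r(t)) ⋅ r'(t) = sin²t + cos²t = 1, so the exact work is 2π. The function name work_integral and the midpoint rule are illustrative assumptions.

```python
import math

def work_integral(F, r, r_prime, a, b, n=10_000):
    """Midpoint-rule estimate of the integral of F(r(t)) . r'(t) dt over [a, b]."""
    dt = (b - a) / n
    total = 0.0
    for i in range(n):
        t = a + (i + 0.5) * dt
        Fx, Fy = F(*r(t))
        dx, dy = r_prime(t)
        total += (Fx * dx + Fy * dy) * dt  # tangential component times dt
    return total

F = lambda x, y: (-y, x)                         # assumed rotational field
r = lambda t: (math.cos(t), math.sin(t))         # unit circle, counterclockwise
r_prime = lambda t: (-math.sin(t), math.cos(t))

work = work_integral(F, r, r_prime, 0.0, 2 * math.pi)
print(work, 2 * math.pi)
```

A nonzero result around a closed loop also shows that this particular field is not conservative.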

Conservative Vector Fields

Conservative vector fields are a special class where the work done moving an object between two points is independent of the path taken – a key concept in physics and engineering․ Mathematically, a vector field F is conservative if it can be expressed as the gradient of a scalar potential function, f, meaning F = ∇f․

Identifying conservative fields often involves checking whether the field is path-independent, or verifying that its curl is zero. The curl test is conclusive only on a simply connected domain (one with no holes); on other domains a zero curl is necessary but not sufficient. Finding the potential function f involves integrating the components of F. These fields have significant implications for simplifying calculations and understanding energy conservation principles within vector calculus.

Potential Functions

Potential functions are scalar fields intrinsically linked to conservative vector fields․ If a vector field F is conservative, a scalar function f exists such that F equals the gradient of f (∇f)․ This f is the potential function, representing potential energy in physical systems․

Finding potential functions involves integrating the components of the vector field․ Crucially, the existence of a potential function confirms path independence in line integrals․ These functions are vital for simplifying complex calculations and provide a powerful tool for analyzing physical phenomena governed by conservative forces, offering a deeper understanding of vector calculus․
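A short worked example shows the integration procedure. It assumes the field F(x, y) = <2xy, x²>, chosen for illustration: integrate the first component with respect to x, then use the second component to pin down the unknown function of y.

```latex
\begin{aligned}
\frac{\partial f}{\partial x} = 2xy
  \;&\Rightarrow\; f(x, y) = x^{2} y + g(y), \\
\frac{\partial f}{\partial y} = x^{2} + g'(y) = x^{2}
  \;&\Rightarrow\; g'(y) = 0, \\
  &\Rightarrow\; f(x, y) = x^{2} y + C .
\end{aligned}
```

Taking the gradient of x²y + C returns <2xy, x²>, confirming that this f is a potential function for the assumed field.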

Path Independence

Path independence is a cornerstone concept in vector calculus, directly tied to conservative vector fields․ For a conservative field, the work done moving an object between two points is independent of the path taken – only the endpoints matter․ This simplifies line integral calculations significantly․

Mathematically, path independence implies that the line integral of a conservative field is zero around any closed loop․ Potential functions guarantee path independence; if a potential function exists, the field is conservative․ Understanding this property is crucial for solving problems in physics and engineering, streamlining complex analyses․
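Path independence can be observed numerically. The sketch assumes the conservative field F(x, y) = <2xy, x²>, which is the gradient of f(x, y) = x²y, and integrates it from (0, 0) to (1, 2) along two different paths: a straight line and a parabola. Both results should match f(1, 2) − f(0, 0) = 2. The field, the paths, and the helper name line_integral are illustrative choices.

```python
def line_integral(F, r, r_prime, a, b, n=2_000):
    """Midpoint-rule estimate of the integral of F(r(t)) . r'(t) dt over [a, b]."""
    dt = (b - a) / n
    total = 0.0
    for i in range(n):
        t = a + (i + 0.5) * dt
        Fx, Fy = F(*r(t))
        dx, dy = r_prime(t)
        total += (Fx * dx + Fy * dy) * dt
    return total

F = lambda x, y: (2 * x * y, x**2)  # gradient of f(x, y) = x^2 * y
potential = lambda x, y: x**2 * y

# Two paths from (0, 0) to (1, 2), both parameterized on 0 <= t <= 1.
straight = line_integral(F, lambda t: (t, 2 * t), lambda t: (1.0, 2.0), 0.0, 1.0)
parabola = line_integral(F, lambda t: (t, 2 * t**2), lambda t: (1.0, 4 * t), 0.0, 1.0)
endpoint_difference = potential(1, 2) - potential(0, 0)

print(straight, parabola, endpoint_difference)  # all close to 2
```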

Multiple Integrals

Expanding beyond single variables, multiple integrals – double and triple – enable calculations of volumes, masses, and other properties over regions in two and three dimensions․

Double Integrals

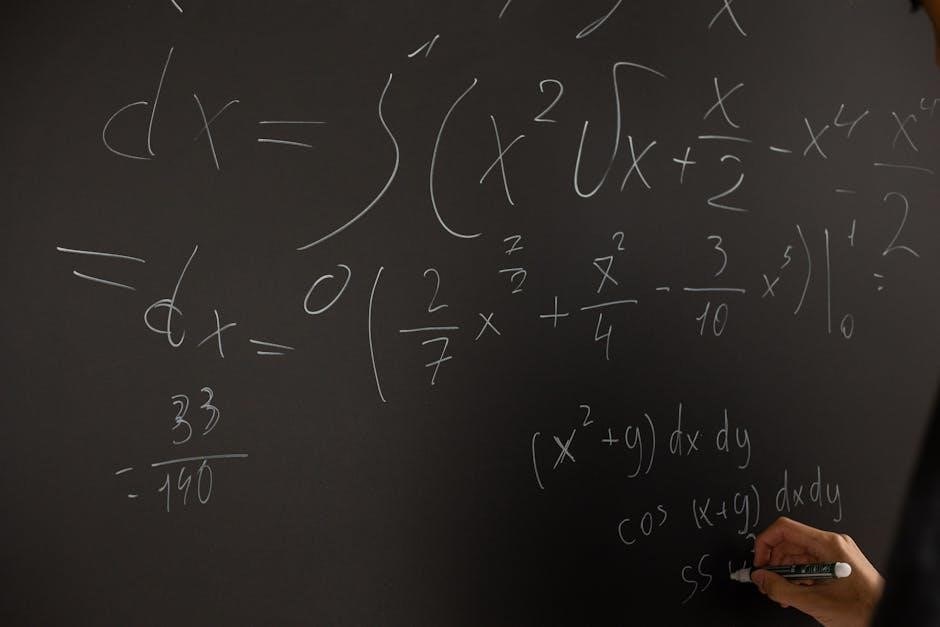

Double integrals extend the concept of the definite integral to functions of two variables; for a nonnegative function they represent the volume under its surface. In practice they are evaluated as iterated integrals (justified by Fubini’s theorem for continuous functions): one integral is evaluated while treating the other variable as constant, and then the result is integrated with respect to the remaining variable.

This technique allows us to break down complex calculations into manageable steps․ Applications are vast, including determining the area of a region in the xy-plane – achieved by integrating the constant function ‘1’ over that region․ Furthermore, double integrals are crucial for calculating the mass of a lamina with varying density, or the center of mass of a two-dimensional object․

Understanding the order of integration (dx dy or dy dx) is vital, as it can significantly impact the complexity of the calculation․ Careful consideration of the region of integration is also paramount for accurate results․

Iterated Integrals

Iterated integrals are the cornerstone of evaluating double and triple integrals, breaking down complex multi-dimensional integration into a sequence of single-variable integrals․ This process involves integrating one variable at a time, treating the others as constants – a powerful simplification technique․

The order of integration matters significantly; switching between dx dy and dy dx can alter the difficulty of the calculation․ Determining the correct limits of integration for each variable is crucial, often defined by the boundaries of the region over which integration occurs․

Successfully applying iterated integrals requires a solid understanding of single-variable integration techniques and careful attention to the limits, ensuring accurate computation of volumes, areas, and other multi-variable quantities․
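The "inner integral first, outer integral second" structure can be sketched numerically. The example assumes the region between y = x² and y = x for 0 ≤ x ≤ 1, so the inner limits depend on x. Integrating the constant 1 gives the region's area, exactly 1/6, and integrating f(x, y) = xy gives exactly 1/24. The function name double_integral and the midpoint rule are illustrative assumptions.

```python
def double_integral(f, a, b, y_lo, y_hi, n=400):
    """Midpoint-rule estimate of the iterated integral of f(x, y) dy dx,
    with x from a to b and y from y_lo(x) to y_hi(x)."""
    dx = (b - a) / n
    total = 0.0
    for i in range(n):
        x = a + (i + 0.5) * dx
        lo, hi = y_lo(x), y_hi(x)  # inner limits depend on the outer variable
        dy = (hi - lo) / n
        inner = 0.0
        for j in range(n):
            y = lo + (j + 0.5) * dy
            inner += f(x, y) * dy  # inner integral: x is held constant
        total += inner * dx        # outer integral over x
    return total

lower = lambda x: x**2
upper = lambda x: x

area = double_integral(lambda x, y: 1.0, 0.0, 1.0, lower, upper)     # about 1/6
moment = double_integral(lambda x, y: x * y, 0.0, 1.0, lower, upper)  # about 1/24
print(area, moment)
```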

Applications of Double Integrals: Area and Volume

Double integrals extend beyond mere calculation, providing powerful tools for determining geometric properties in two dimensions․ Specifically, they elegantly compute the area of regions defined by functions and boundaries within the xy-plane․

Furthermore, double integrals are instrumental in calculating volumes․ By integrating the height function (z = f(x, y)) over a given region, we can precisely determine the volume beneath the surface and above the xy-plane․

These applications demonstrate the practical utility of multivariable calculus, bridging theoretical concepts to real-world geometric problems, and solidifying the importance of iterated integration techniques․

Triple Integrals

Triple integrals represent the natural extension of double integrals into three dimensions, enabling the calculation of properties within a three-dimensional region․ This involves integrating a function over a solid volume, expanding the scope of analysis significantly․

Crucially, these integrals can be evaluated using iterated integrals in various coordinate systems – Cartesian, cylindrical, and spherical․ The choice of coordinate system depends on the geometry of the region to simplify the integration process․

Mastering triple integrals is vital for advanced applications, providing a robust framework for determining volumes, masses, and other physical quantities within complex three-dimensional spaces․

Iterated Integrals in Cartesian, Cylindrical, and Spherical Coordinates

Evaluating triple integrals often necessitates employing iterated integrals, a technique involving sequential integration with respect to each variable․ The choice of coordinate system – Cartesian, cylindrical, or spherical – profoundly impacts the integral’s complexity․

Cartesian coordinates are straightforward but can become cumbersome for regions with cylindrical or spherical symmetry․ Cylindrical coordinates simplify integrals over cylinders and cones, while spherical coordinates excel with spheres and cones․

Strategic selection of the appropriate coordinate system dramatically reduces computational effort, transforming challenging integrals into manageable ones, ultimately yielding accurate results for volume and mass calculations․
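The payoff of a well-chosen coordinate system shows up in the volume element, which is dV = dx dy dz in Cartesian coordinates, r dr dθ dz in cylindrical coordinates, and ρ² sin φ dρ dφ dθ in spherical coordinates. As a worked example, the volume of a ball of radius R separates into three one-variable integrals in spherical coordinates. The convention assumed here is that φ is measured from the positive z-axis.

```latex
\begin{aligned}
V &= \int_{0}^{2\pi} \int_{0}^{\pi} \int_{0}^{R}
     \rho^{2} \sin\varphi \; d\rho \, d\varphi \, d\theta \\
  &= \left( \int_{0}^{2\pi} d\theta \right)
     \left( \int_{0}^{\pi} \sin\varphi \, d\varphi \right)
     \left( \int_{0}^{R} \rho^{2} \, d\rho \right) \\
  &= 2\pi \cdot 2 \cdot \frac{R^{3}}{3}
   = \frac{4}{3} \pi R^{3} .
\end{aligned}
```

The same volume in Cartesian coordinates requires square-root limits in two of the three integrals, which is why the spherical form is usually preferred for this region.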

Applications of Triple Integrals: Volume and Mass

Triple integrals are powerful tools extending beyond theoretical exercises, finding practical application in determining the volume of complex three-dimensional regions․ By integrating the constant function ‘1’ over the region, we directly compute its volume, a fundamental geometric property․

Furthermore, triple integrals enable the calculation of mass when the density function is known․ Integrating the density function over the volume yields the total mass, providing crucial insights into physical systems․
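Both uses can be sketched on the simplest possible solid. The example assumes the unit cube [0, 1]³ and an assumed density ρ(x, y, z) = x + y + z. Integrating 1 should return the volume, 1, and integrating the density should return the mass, 3/2. The helper name triple_integral and the midpoint rule are illustrative.

```python
def triple_integral(f, n=20):
    """Midpoint-rule estimate of the integral of f over the unit cube [0, 1]^3."""
    h = 1.0 / n
    mids = [(i + 0.5) * h for i in range(n)]  # cell midpoints along one axis
    return sum(f(x, y, z) for x in mids for y in mids for z in mids) * h**3

volume = triple_integral(lambda x, y, z: 1.0)      # about 1
mass = triple_integral(lambda x, y, z: x + y + z)  # about 1.5
print(volume, mass)
```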

These applications demonstrate the versatility of multivariable calculus, bridging mathematical concepts to real-world scenarios in engineering, physics, and other scientific disciplines, offering precise quantitative analysis․

Vector Calculus Theorems

Fundamental theorems like Green’s, Stokes’, and the Divergence Theorem establish crucial relationships between vector fields and integrals, simplifying complex calculations significantly․

Green’s Theorem

Green’s Theorem elegantly connects a line integral around a simple closed curve C to a double integral over the plane region D bounded by C․ This powerful relationship transforms calculations, allowing us to evaluate line integrals by converting them into more manageable double integrals – and vice versa․

Specifically, for a vector field F = <P, Q> and a positively oriented (counterclockwise) curve C, the theorem equates the line integral of P dx + Q dy around C with the double integral of ∂Q/∂x − ∂P/∂y, the scalar curl of the field, over D. Understanding this connection is vital for solving problems in fluid dynamics and electromagnetism, where line integrals often represent work or circulation.

Effectively, Green’s Theorem provides a bridge between different integral representations, offering flexibility and simplifying complex computations within planar regions, a cornerstone of vector calculus applications․
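A numerical check makes the equality concrete. The sketch assumes the field F = <P, Q> = <−y³, x³> on the unit disk, so ∂Q/∂x − ∂P/∂y = 3x² + 3y². Both sides of Green's Theorem should equal 3π/2. The field, the region, and the function names circulation and area_integral are illustrative choices.

```python
import math

def circulation(n=2_000):
    """Line integral of F = (-y^3, x^3) counterclockwise around the unit circle."""
    dt = 2 * math.pi / n
    total = 0.0
    for i in range(n):
        t = (i + 0.5) * dt
        x, y = math.cos(t), math.sin(t)
        dx, dy = -math.sin(t), math.cos(t)  # components of r'(t)
        total += (-y**3 * dx + x**3 * dy) * dt
    return total

def area_integral(n=2_000):
    """Double integral of 3x^2 + 3y^2 over the unit disk, computed in polar form."""
    dr = 1.0 / n
    total = 0.0
    for i in range(n):
        r = (i + 0.5) * dr
        # Integrand 3r^2 times the polar area element r dr dtheta; the integrand
        # does not depend on theta, so the theta integral contributes 2*pi.
        total += 3 * r**2 * r * dr * 2 * math.pi
    return total

print(circulation(), area_integral(), 3 * math.pi / 2)
```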

Stokes’ Theorem

Stokes’ Theorem extends Green’s Theorem to three dimensions, establishing a profound link between a line integral around a closed curve C and a surface integral over an oriented surface S whose boundary is C, where the direction of travel along C matches the orientation of S by the right-hand rule. This generalization is crucial for understanding phenomena involving rotation and circulation in three-dimensional space.

Mathematically, the theorem equates the line integral of a vector field along C to the surface integral of the curl of the field over S․ This allows for the conversion between these integral forms, simplifying calculations in scenarios like analyzing fluid flow or electromagnetic fields․

Essentially, Stokes’ Theorem provides a powerful tool for relating local properties (curl) to global behavior (circulation), offering deep insights into vector field dynamics․
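A worked example illustrates the equality. It assumes the field F = <−y, x, 0>, the upper unit hemisphere S with outward unit normal n = <x, y, z>, and its boundary C, the unit circle in the xy-plane traversed counterclockwise when viewed from above. These choices are illustrative.

```latex
\begin{aligned}
\oint_{C} \mathbf{F} \cdot d\mathbf{r}
  &= \int_{0}^{2\pi} \left( \sin^{2} t + \cos^{2} t \right) dt = 2\pi, \\
\nabla \times \mathbf{F} &= \langle 0, 0, 2 \rangle,
  \qquad (\nabla \times \mathbf{F}) \cdot \mathbf{n} = 2z = 2\cos\varphi, \\
\iint_{S} (\nabla \times \mathbf{F}) \cdot \mathbf{n} \, dS
  &= \int_{0}^{2\pi} \int_{0}^{\pi/2} 2 \cos\varphi \, \sin\varphi \; d\varphi \, d\theta
   = 2\pi \left[ \sin^{2}\varphi \right]_{0}^{\pi/2} = 2\pi .
\end{aligned}
```

Both sides equal 2π, as the theorem requires.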

Divergence Theorem

The Divergence Theorem, also known as Gauss’s Theorem, provides a fundamental relationship between a volume integral over a solid region E and a surface integral over the boundary surface S enclosing E․ It’s a cornerstone of vector calculus, offering a powerful tool for simplifying calculations․

Specifically, the theorem states that the flux of a vector field across a closed surface is equal to the divergence of the field integrated over the volume enclosed by that surface․ This connection reveals how much a vector field “spreads out” or “converges” within a region․

Applications span diverse fields, including fluid dynamics (analyzing sources and sinks) and electromagnetism (calculating electric flux)․
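The theorem can be verified numerically on a simple solid. The sketch assumes the field F = <x², y², z²> on the unit cube [0, 1]³, whose divergence is 2x + 2y + 2z. The volume integral of the divergence and the outward flux through the six faces should both equal 3. The field and region are illustrative choices.

```python
def F(x, y, z):
    """Assumed example field F = (x^2, y^2, z^2)."""
    return (x**2, y**2, z**2)

def div_F(x, y, z):
    """Divergence of F, computed by hand: 2x + 2y + 2z."""
    return 2 * x + 2 * y + 2 * z

n = 20
h = 1.0 / n
mids = [(i + 0.5) * h for i in range(n)]  # midpoints along one axis

# Left side: integrate the divergence over the cube.
volume_integral = sum(div_F(x, y, z) for x in mids for y in mids for z in mids) * h**3

# Right side: outward flux through the six faces. On each pair of opposite
# faces the outward normals are +e and -e, so the contributions subtract.
flux = 0.0
for u in mids:
    for v in mids:
        flux += (F(1.0, u, v)[0] - F(0.0, u, v)[0]) * h**2  # faces x = 1 and x = 0
        flux += (F(u, 1.0, v)[1] - F(u, 0.0, v)[1]) * h**2  # faces y = 1 and y = 0
        flux += (F(u, v, 1.0)[2] - F(u, v, 0.0)[2]) * h**2  # faces z = 1 and z = 0

print(volume_integral, flux)  # both close to 3
```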

Applications of Multivariable and Vector Calculus

Real-world problems in fluid dynamics, electromagnetism, and optimization are elegantly solved using these advanced calculus techniques, demonstrating their broad applicability and power․

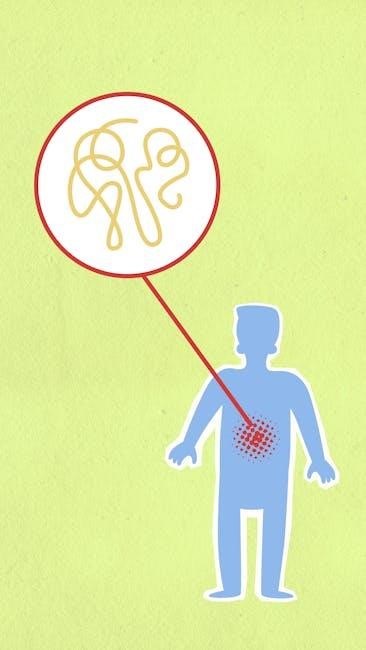

Optimization Problems with Constraints

Multivariable calculus provides powerful tools for solving optimization problems where variables are not independent, but are linked by constraints․ These scenarios frequently arise in engineering, economics, and physics, demanding techniques beyond single-variable calculus․

Lagrange multipliers emerge as a central method, allowing us to find the extrema of a function subject to equality constraints․ This involves formulating a new function, the Lagrangian, incorporating both the objective function and the constraints․

Finding critical points of the Lagrangian then reveals potential maximum and minimum values, which must be carefully evaluated to determine the optimal solution within the defined constraints․ This approach extends to problems with multiple constraints, increasing complexity but maintaining the core principle․
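A short worked example shows the method. It assumes the problem of maximizing f(x, y) = xy subject to the constraint x + y = 10, chosen for illustration, and uses the sign convention L = f − λ(g − c); some texts use a plus sign instead.

```latex
\begin{aligned}
\mathcal{L}(x, y, \lambda) &= xy - \lambda \, (x + y - 10), \\
\frac{\partial \mathcal{L}}{\partial x} = y - \lambda = 0, \qquad
\frac{\partial \mathcal{L}}{\partial y} &= x - \lambda = 0, \qquad
\frac{\partial \mathcal{L}}{\partial \lambda} = -(x + y - 10) = 0, \\
\Rightarrow\; x = y = \lambda &= 5, \qquad f(5, 5) = 25 .
\end{aligned}
```

On the constraint line f reduces to x(10 − x), a downward-opening parabola, so this critical point is the constrained maximum.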

Fluid Dynamics

Multivariable and vector calculus are fundamental to understanding fluid dynamics, describing the motion of liquids and gases․ Vector fields represent the velocity of the fluid at each point in space, enabling analysis of flow patterns and forces․

Concepts like divergence and curl become crucial; divergence indicates the rate of fluid expansion or compression, while curl describes the fluid’s rotation․ These are calculated using partial derivatives and vector operations․
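The two operators can be contrasted on a pair of simple two-dimensional flows. The sketch assumes a rigid rotation v = <−y, x>, which should have zero divergence and scalar curl 2, and a uniform outward source v = <x, y>, which should have divergence 2 and zero curl. The partial derivatives are estimated with central differences; the function names divergence and curl_z are illustrative.

```python
def divergence(F, x, y, h=1e-5):
    """Estimate dP/dx + dQ/dy for a 2D field F = (P, Q)."""
    dP_dx = (F(x + h, y)[0] - F(x - h, y)[0]) / (2 * h)
    dQ_dy = (F(x, y + h)[1] - F(x, y - h)[1]) / (2 * h)
    return dP_dx + dQ_dy

def curl_z(F, x, y, h=1e-5):
    """Estimate the scalar curl dQ/dx - dP/dy for a 2D field F = (P, Q)."""
    dQ_dx = (F(x + h, y)[1] - F(x - h, y)[1]) / (2 * h)
    dP_dy = (F(x, y + h)[0] - F(x, y - h)[0]) / (2 * h)
    return dQ_dx - dP_dy

rotation = lambda x, y: (-y, x)  # fluid spinning about the origin
source = lambda x, y: (x, y)     # fluid spreading out from the origin

point = (0.3, -0.7)
print(divergence(rotation, *point), curl_z(rotation, *point))  # about 0 and 2
print(divergence(source, *point), curl_z(source, *point))      # about 2 and 0
```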

The Navier-Stokes equations, a cornerstone of fluid dynamics, are partial differential equations that express conservation of momentum for a fluid in the language of these calculus operators. Solving these equations, often computationally, predicts fluid behavior under various conditions, from airflow over wings to ocean currents.

Electromagnetism

Multivariable calculus provides the mathematical framework for describing electromagnetic fields․ Electric and magnetic fields are represented as vector fields, where the strength and direction of the field vary in space․

Maxwell’s equations, the foundation of classical electromagnetism, are expressed using vector calculus operators like divergence and curl․ These equations relate electric and magnetic fields to their sources – charges and currents․

Concepts like potential functions, derived from conservative vector fields, simplify the analysis of electric potentials. Surface integrals are used to calculate electric and magnetic fluxes, while line integrals give circulation and voltage; both are essential for understanding electromagnetic interactions and wave propagation.